Blog Summary of the TFGBV Policy Dialogue Series – Session 6

For our final session in the Policy Dialogue Series—a joint initiative of UN Women and the SVRI Community of Practice on TFGBV—we examined the risks of generative AI. Rather than viewing these risks as purely technological challenges, the discussion underscored their nature as socio-technical problems. You can now watch the recording here.

As moderator Alexandra Robinson (UNFPA) stated, these systems are “shaped by the histories of colonisation, patriarchy, racial capitalism, and global inequalities.” To help define this emerging phenomenon, Alex offered a useful framework distinguishing between two dominant forms of AI. Predictive AI forecasts outcomes from existing data, while generative AI creates new content from learned patterns. When used by malicious actors on platforms lacking robust governance, these technologies operate as a coordinated TFGBV attack system: predictive AI acts as a scout, identifying and selecting targets, while generative AI functions as a factory, mass-producing weapons of abuse.

“We can demand safety by design, not as an add-on, but as a minimum viable product requirement. And we can invest in feminist technologies, Global South research, and participatory innovation ecosystems. It’s also important to treat TFGBV as a core AI governance issue, and not a side effect.” – Alexandra Robinson

Although AI systems are often perceived as neutral, they reproduce the biases embedded in their training data as well as those of their creators. The consequences are not merely symbolic but extend far beyond the digital sphere, enabling physical stalking, coercion, blackmail, job loss, withdrawal from public life, and in the most extreme cases, femicide.

A core challenge for research and coordinated responses to TFGBV is the speed at which new forms of violence emerge. Tools originally designed to help can quickly be repurposed for harm, while response systems often struggle to act with the urgency required. Despite solid structural understandings of violence, international and national TFGBV frameworks, and recognition of the particular vulnerabilities of specific groups and online misogyny, there remains an urgent need to address unfamiliar and rapidly evolving forms of abuse.

Addressing this multifaceted socio-technical challenge requires integrating a Feminist AI lens—one that actively counters gender bias and embeds safety by design, not as an optional add-on but as a foundational requirement.

Alex opened the panel to a multi-stakeholder dialogue, drawing on perspectives from the Global South, government, civil society, and the research community to illuminate the interconnected challenges and pathways for mitigation.

The Global South imperative: Responding to the digital and linguistic challenges

Israel Olatunji Tijani, ICT4D expert and Founder of ChatVE, emphasised that solutions created in the Global North frequently fail in the Global South due to structural disconnects from local realities. Digital access remains the primary challenge, compounded by multiple intersecting barriers.

This digital divide creates a critical flaw in current AI moderation systems. Perpetrators exploit linguistic gaps, evading filters trained primarily on English. They increasingly use local languages to target women in politics.

In response, Israel’s organisation is implementing bottom-up strategies, including compiling TFGBV lexicons in African languages—Swahili, Hausa, Yoruba—to train more culturally and linguistically competent AI systems.

A significant innovation he highlighted is “The51% AI Bot”, a tool grounded in the political principle that women are not a minority. It aims to make complex legal and policy frameworks accessible and inclusive in everyday language.

“We built The51% AI Bot to make sure that women can easily understand in everyday languages the instruments and frameworks available to them to protect themselves [from TFGBV].” – Israel Olatunji Tijani

A governmental approach: Balancing innovation, regulation, and cooperation

Governments must reconcile the promise of AI-driven innovation with their obligation to protect citizens and uphold human rights. Rachel Grant, Policy Lead for Online Safety in Digital Development of the UK’s Foreign, Commonwealth and Development Office, outlined a dual approach grounded in these priorities.

The UK Online Safety Act exemplifies a regulatory model rooted in safety by design and technological neutrality, engaging across borders to address a threat that is inherently transnational. The framework aims to manage risk proactively while enabling innovation.

Grant also highlighted the lack of public awareness and media literacy regarding TFGBV. Many people normalise deepfakes or the non-consensual distribution of intimate content, underscoring the need for public education campaigns, particularly concerning AI-generated sexual images of women and girls.

“The use of generative AI is a new and emerging area for all of us. We’re learning as we go. There is a clear recognition of the scale and urgency of the challenge. We have a way to go to get that right, including in how we work with private sector tech companies.” – Rachel Grant

A key achievement, she noted, is the growing prominence of gender-focused perspectives in international cooperation agendas advocating for “human-centric, trustworthy, and responsible AI.” A practical example is the FCDO-funded StopNCII.org, an AI-powered platform for detecting and removing non-consensual intimate images at scale.

Her intervention demonstrates that cross-sector collaboration is indispensable for addressing emerging harms and crafting innovative protection mechanisms.

Redesigning power through feminist AI

From the civil society perspective, Marina Meira, Public Policy Coordinator at Derechos Digitales, emphasised that TFGBV is not a new problem created by technology but a continuation of offline gendered violence. AI does not emerge in a vacuum; it absorbs and amplifies inequalities rooted in patriarchal and extractive systems. Therefore, the task is not merely to mitigate harm but to redistribute power.

“We need to have survivor-centred laws (…) These approaches are non-negotiable, and we need to have them from an intersectional perspective, so women, LGBTQA+ groups, racialised communities, they must not only be consulted, they must hold real power in design, evaluation, and governance, so we need to push also for capacity building within these groups.” – Marina Meira

Feminist AI requires empowering marginalised communities in design and oversight, establishing gender-transformative approaches, and enforcing platform and developer obligations. This includes training data transparency, independent safety audits that scrutinise design choices and business models—not just model outputs—and robust, gender-sensitive redress mechanisms.

This reframes the conversation from mitigating harm within an exclusionary system to reimagining technology in service of an inclusive society.

The research perspective: Evidence, awareness, and the ‘chilling effect’

Selam Abdella, researcher at the Global Center on AI Governance, highlighted a structural barrier: widespread public unfamiliarity with the term TFGBV. A recent South African study found that students across four universities had little awareness of the concept. This gap fuels underreporting and under-researching, undermining recognition of TFGBV as a serious crime.

One of the most damaging impacts documented is the chilling effect—the self-censorship and withdrawal from public spaces caused by persistent threats of misogynistic abuse. This intersects with the paradox of exposure: expanding digital access is essential, yet increased online presence heightens risks, particularly for activists and educators.

Studies highlight the proliferation of readily available deepfake tools designed for non-consensual intimate content and the use of AI-generated material to undermine women in politics during elections.

Yet research also shows resilience: for example, Black Brazilian women using digital spaces to advance activism and counter technological violence.

Selam emphasised three key priorities:

- Definition and Research: Continuously documenting emerging forms of TFGBV.

- Enforceable Regulation: Ensuring robust implementation of rights-protective frameworks.

- Collaboration: Building coordinated efforts among researchers, policymakers, advocates, and affected communities.

“Researchers, policymakers, and advocates, and especially women themselves, or gender minorities as well, need to come together to understand what kinds of violence folks are facing online, and how to combat it and ensure that there’s redressing.” – Selam Abdella

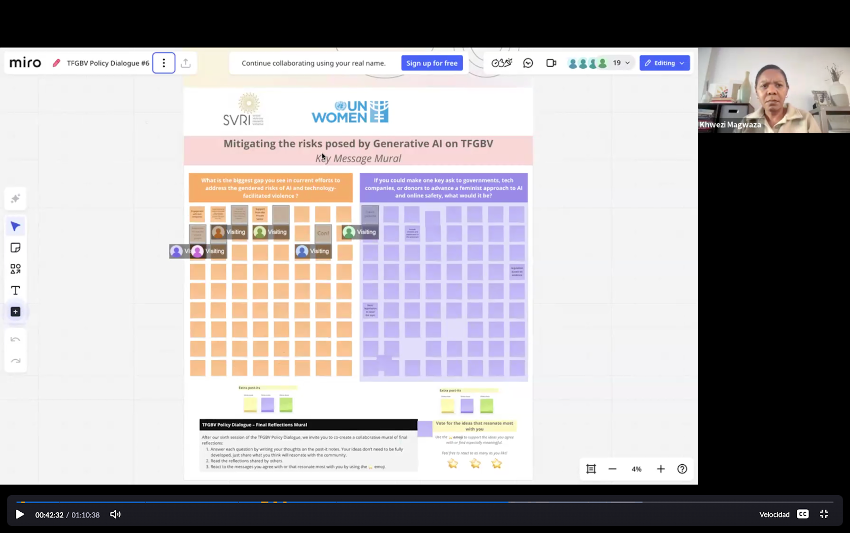

A unified framework for action and co-creation messages

The overarching conclusion from the panel is that mitigating the harms of Generative AI is not merely a technical challenge, but fundamentally a question of power. As Alex summarised: “…we’re all in the game of shifting the power of AI back to the people and creating a world that we want, instead of amplifying the world that we have.” Achieving this vision requires a unified, deliberate, and sustained effort across all sectors of society.

The panel emphasised the inclusion of diverse stakeholders, underscoring that collaborative action, systemic accountability, and the presence of survivors and marginalised communities, particularly from the Global South, are essential across all stages of AI design, deployment, and governance. The exercise also highlighted the importance of promoting digital and AI literacy, both to prevent and respond to structural inequality and to empower the public to recognise and counter technology-facilitated harms.

In addition, the Miro inputs emphasised:

- Need for stronger, feminist, evidence-based regulation and basic TFGBV legislation.

- Importance of clear referral pathways and support systems for survivors.

- Co-creation of culturally and linguistically responsive AI tools and moderation practices.

- Expansion of digital and AI literacy to empower the public and key decision-makers.

Previous explainers

- Staying aligned with global standards and commitments on TFGBV (12 June 2025) – Read the blog

- Actioning global standards and commitments on TFGBV at national level (17 July 2025) – Read the blog

- Mitigating online violence against women human rights defenders (14 August 2025) – Read the blog

- Engaging the manosphere in efforts to prevent TFGBV (18 September 2025) – Read the blog

- Safety by design, online content moderation & community management (16 October 2025) – Read the blog

Additional resources

- Hawkings, W. (07 May, 2025). New Oxford study uncovers explosion of accessible deepfake AI image generation models intended for the creation of non-consensual, sexualised images of women.

- Weaver, M. (25 November, 2025). One in four unconcerned by sexual deepfakes created without consent, survey finds.

This blog was written by Alexandra De Fillippo and Khwezi Magwaza, Facilitators of the TFGBV CoP, and Andrea Chavez.